VGGNet Explained: How Deep Learning Models See Images Through Feature Maps

When humans look at an image, we instantly recognize objects, shapes, colors, and meaning. If we see a cat, we know it is a cat without thinking about pixels, edges, textures, or mathematical representations.

But a deep learning model does not see the world the same way.

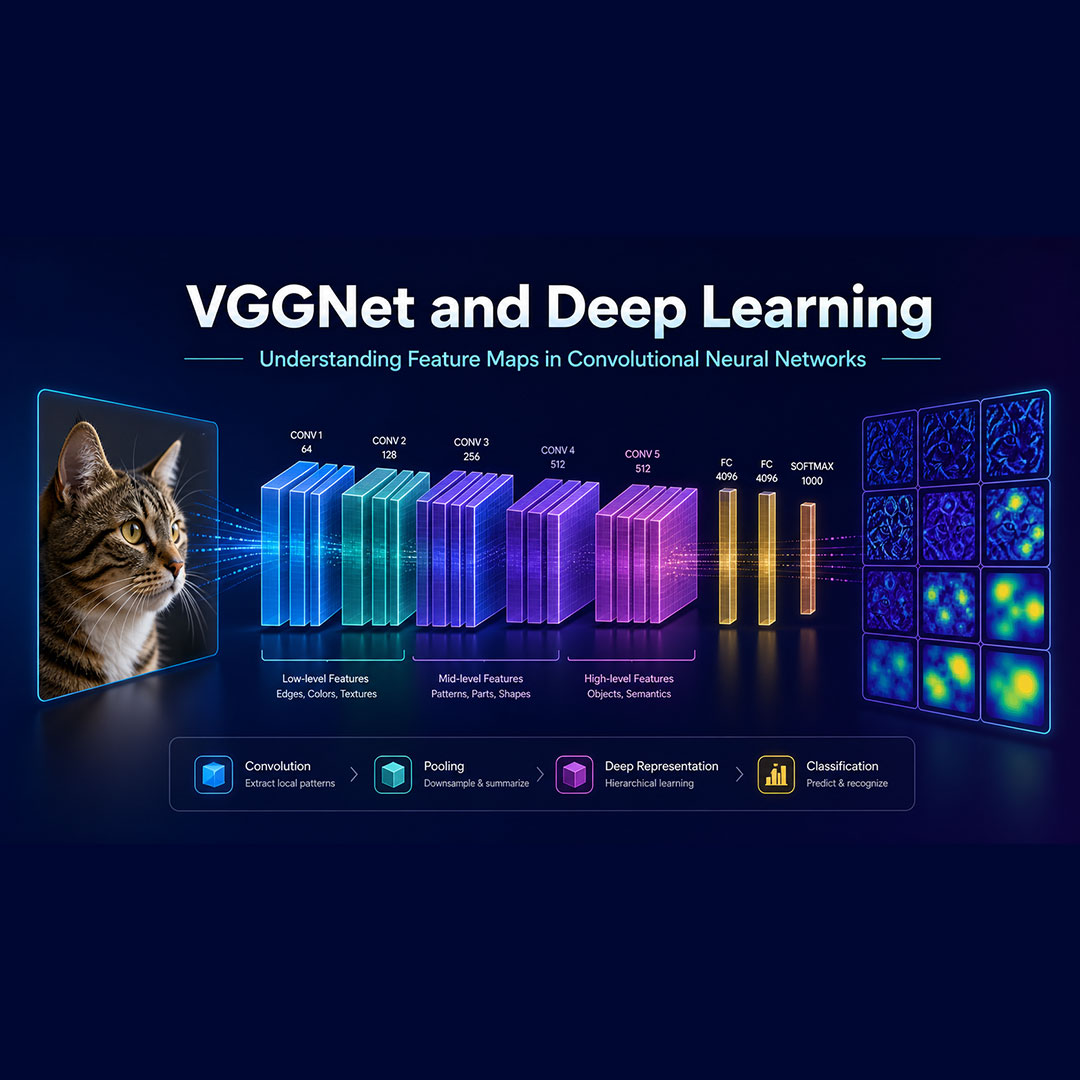

A convolutional neural network, or CNN, learns from images by transforming raw pixels into increasingly meaningful feature representations. One of the most famous architectures that helped popularize this idea is VGGNet.

VGGNet is a landmark deep learning model in computer vision. It became well known because of its simple but powerful design: using many small convolution filters stacked deeply to learn visual patterns. Even though modern architectures have become more advanced, VGGNet remains one of the best models for understanding how CNNs process images.

In this article, we will explain what VGGNet is, how feature maps work, and why this architecture is still important for anyone learning deep learning and computer vision.

What Is VGGNet?

VGGNet is a convolutional neural network architecture developed for image recognition tasks. It is especially known for using small 3 × 3 convolution filters throughout the network.

Instead of using large filters, VGGNet stacks multiple small convolution layers. This allows the model to build deeper representations while keeping the architecture clean and consistent.

The most famous versions are:

| Model | Number of Weight Layers |

|---|---|

| VGG16 | 16 layers |

| VGG19 | 19 layers |

The core idea behind VGGNet is simple:

A deep network with small filters can learn powerful visual features.

This made VGGNet influential because it showed that increasing network depth could significantly improve image classification performance when designed properly.

Why VGGNet Matters in Deep Learning

VGGNet is important because it helps us understand one of the central ideas in deep learning:

Neural networks learn representations.

A CNN does not simply memorize an image. Instead, it learns multiple levels of abstraction.

For example, when processing an image of a cat, early layers may detect simple visual patterns such as edges or color changes. Middle layers may detect textures, eyes, ears, or fur-like patterns. Deeper layers may recognize more meaningful object-level structures.

This layered learning process is what makes deep learning powerful.

The model starts with raw pixels and gradually builds a more useful representation for prediction.

What Are Feature Maps in CNNs?

A feature map is the output produced by a convolutional layer.

In simple terms, a feature map shows what a specific filter detects in an image. Each filter learns to respond to a certain type of visual pattern.

For example, one filter may respond strongly to vertical edges. Another may respond to curves. Another may detect textures. As the network gets deeper, filters begin to respond to more complex patterns.

A feature map is usually represented as a 3D volume:

Height × Width × Channels

Here:

- Height and Width represent the spatial size of the feature map.

- Channels represent different learned filters or visual patterns.

So, if a layer has 64 channels, that means the layer is learning 64 different feature representations from the input.

This is one of the most important concepts in convolutional neural networks.

The image is not processed as one single object. It is transformed into many channels of learned information.

How VGGNet Sees an Image

Let’s imagine we give VGGNet an image of a cat.

At the beginning, the model receives raw pixel values. These pixels contain color and intensity information, but they do not yet contain meaning.

After the first few convolution layers, VGGNet begins detecting low-level features such as:

- edges

- corners

- brightness changes

- simple color patterns

After more layers, the network starts combining these simple features into more complex patterns, such as:

- eyes

- whiskers

- fur texture

- rounded shapes

- object boundaries

Near the final layers, VGGNet learns high-level semantic features. These features are useful for classification.

At this point, the network is no longer thinking in terms of pixels. It is working with learned representations that help answer the final question:

What object is in this image?

This is how deep learning converts raw visual data into intelligent predictions.

Understanding Feature Map Notation

In deep learning mathematics, a feature map can be represented as a matrix.

Suppose a feature map has height H, width W, and C channels. We can flatten the spatial dimensions so that:

N = H × W

Then the feature map can be represented as:

X ∈ R^(HW × C)

This means each row represents one pixel location, and each column represents one channel.

Another way to write this is:

X = [x1, x2, ..., xC]

where each xc represents the vectorized feature map for the c-th channel.

We can also view it row by row:

Each row represents the channel-dimensional feature vector at a specific pixel location.

This notation is useful because it helps researchers and engineers analyze how normalization, convolution, and representation learning work inside CNNs.

In simple words:

Each location in the feature map has a vector that describes what the network has learned at that position.

Why Feature Maps Are Important

Feature maps are important because they reveal how a CNN learns.

They help us understand:

-

What the model detects

Different channels respond to different visual patterns.

-

How information changes across layers

Early layers detect simple patterns, while deeper layers detect complex concepts.

-

Why deeper networks perform better

More layers allow the model to build richer representations.

-

How computer vision models make predictions

Predictions are based on learned visual features, not raw pixels alone.

This is why visualizing feature maps is one of the best ways to understand convolutional neural networks.

VGGNet Architecture in Simple Terms

The VGGNet architecture follows a very consistent structure.

It mainly uses:

3 × 3convolution layers- ReLU activation functions

- max pooling layers

- fully connected layers

- softmax output for classification

A simplified flow looks like this:

Input Image → Convolution Layers → Feature Maps → Pooling → More Convolutions → Fully Connected Layers → Classification

The repeated convolution blocks help the network learn increasingly complex visual features.

The pooling layers reduce the spatial size of the feature maps, making the network more efficient and helping it focus on important patterns.

At the end, fully connected layers use the learned features to classify the image.

VGG16 vs VGG19

The two most popular VGGNet variants are VGG16 and VGG19.

VGG16 has 16 weight layers, while VGG19 has 19 weight layers.

Both architectures follow the same general design philosophy. The main difference is depth.

VGG19 is deeper, but VGG16 is often more commonly used because it provides a strong balance between performance and computational cost.

For many learning and transfer learning projects, VGG16 remains a popular choice.

VGGNet and Transfer Learning

One reason VGGNet is still widely used is transfer learning.

In transfer learning, a model trained on a large dataset is reused for a new task.

For example, instead of training a CNN from scratch, you can use a pretrained VGG16 model and fine-tune it for a custom image classification problem.

This is useful for tasks such as:

- medical image classification

- plant disease detection

- face feature analysis

- product image recognition

- animal classification

- defect detection in manufacturing

The early layers of VGGNet learn general visual features like edges and textures. These features are useful across many different image tasks.

That is why pretrained CNNs are so powerful.

Advantages of VGGNet

VGGNet has several advantages:

- It is easy to understand.

- It uses a clean and consistent architecture.

- It performs well for image classification.

- It is useful for transfer learning.

- It is excellent for learning CNN fundamentals.

For students, researchers, and machine learning developers, VGGNet is one of the best architectures to study first.

Limitations of VGGNet

VGGNet is powerful, but it also has limitations.

The main issue is that it has many parameters, especially in the fully connected layers. This makes it computationally expensive compared with many modern architectures.

Newer models such as ResNet, EfficientNet, and Vision Transformers are often more efficient or more accurate for advanced computer vision tasks.

However, VGGNet remains valuable because its architecture is simple, visual, and easy to interpret.

Final Thoughts

VGGNet is more than just an old deep learning architecture.

It is one of the best ways to understand how convolutional neural networks learn from images.

The most important lesson from VGGNet is this:

Deep learning models do not see images as humans do. They learn layered feature representations.

A CNN transforms pixels into edges, edges into textures, textures into object parts, and object parts into predictions.

That is the power of feature maps.

And that is why VGGNet remains an essential architecture for anyone serious about deep learning, computer vision, and artificial intelligence.