🚀 Introduction: Where Geometry Meets Machine Learning

What if the secret behind powerful machine learning models wasn’t just code—but geometry?

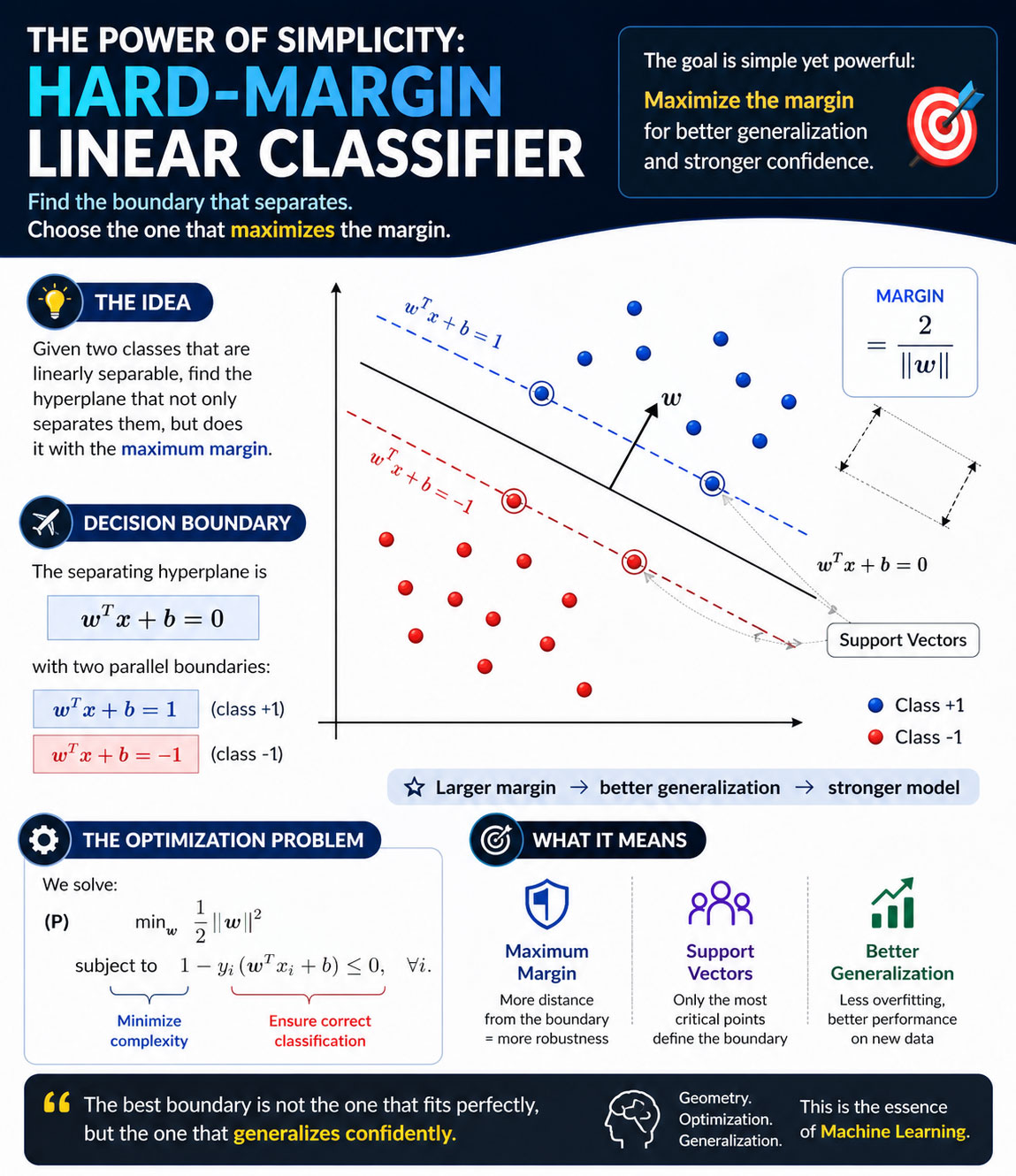

The Hard-Margin Linear Classifier is one of the cleanest and most elegant ideas in machine learning. It shows how we can separate data perfectly using a line (or hyperplane) while keeping that boundary as far as possible from the nearest points of each class.

This concept is the foundation of Support Vector Machines (SVMs) and plays a crucial role in understanding how models generalize.

📐 What Is a Hard-Margin Linear Classifier?

A Hard-Margin Linear Classifier assumes that your data is perfectly linearly separable.

This means:

- You can draw a line (or hyperplane) that separates two classes

- No misclassifications are allowed

The decision boundary is defined as:

w^T x + b = 0

Where:

- w = weight vector

- b = bias

- x = input feature vector (one data point)
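To make the notation concrete, here is a minimal Python sketch that classifies points by which side of the boundary they fall on. The values of `w` and `b`, the sample points, and the helper name `predict` are all made up for illustration.

```python
import numpy as np

# Illustrative values only: a vertical boundary at x1 = 1 in 2-D.
w = np.array([1.0, 0.0])   # weight vector (normal to the boundary)
b = -1.0                   # bias

def predict(X, w, b):
    """Return +1 or -1 for each row of X, using the sign of w^T x + b."""
    scores = X @ w + b
    return np.where(scores >= 0, 1, -1)

X = np.array([[2.0, 0.0], [3.0, 1.0], [0.0, 0.0], [-1.0, 1.0]])
labels = predict(X, w, b)
print(labels.tolist())  # [1, 1, -1, -1]
```

Points with a positive score land on one side of the boundary, points with a negative score on the other.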

🎯 The Key Idea: Maximize the Margin

Not all separating lines are equal.

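The goal is to choose the one that maximizes the margin: the width of the empty band between the two classes, measured between the parallel hyperplanes w^T x + b = +1 and w^T x + b = -1 that touch the closest points on each side.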

👉 The margin is:

margin = 2 / ∥w∥

💡 Insight:

- Smaller ∥w∥ → Larger margin

- Larger margin → Better generalization
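The formula 2 / ∥w∥ is the distance between the parallel hyperplanes w^T x + b = +1 and w^T x + b = -1. A quick sketch of how the width shrinks as ∥w∥ grows, using two arbitrary weight vectors and a hypothetical helper `margin_width`:

```python
import numpy as np

def margin_width(w):
    """Width of the band between w^T x + b = +1 and w^T x + b = -1."""
    return 2.0 / float(np.linalg.norm(w))

w_small = np.array([1.0, 0.0])   # norm 1
w_large = np.array([3.0, 4.0])   # norm 5

print(margin_width(w_small))  # 2.0
print(margin_width(w_large))  # 0.4
```

The bias b does not appear in the formula: it shifts the band but does not change its width.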

🔥 Why Maximizing Margin Matters

Maximizing the margin tends to lead to:

- ✅ Better performance on unseen data

- ✅ More robust decision boundaries

- ✅ Reduced overfitting

In simple terms:

The model doesn’t just separate the data — it does it with confidence.

⭐ Support Vectors: The Most Important Data Points

Only a few points actually define the decision boundary.

These are called support vectors.

- They lie closest to the boundary

- They determine the margin

- Removing one can change the model, while removing any other point leaves the boundary unchanged

💡 Think of them as the critical structure of your dataset.
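One way to spot support vectors in code: they are the points where y_i (w^T x_i + b) equals exactly 1, meaning they sit right on the edge of the margin. The sketch below assumes a tiny toy dataset and takes `w` and `b` as already known (they are the max-margin solution for this particular set, where the closest opposite-class points are (2, 0) and (0, 0)).

```python
import numpy as np

# Toy data, invented for illustration.
X = np.array([[2.0, 0.0], [3.0, 1.0], [0.0, 0.0], [-1.0, 1.0]])
y = np.array([1, 1, -1, -1])

# Assumed to be the trained max-margin boundary for this toy set.
w = np.array([1.0, 0.0])
b = -1.0

# y_i (w^T x_i + b): 1 on the margin's edge, larger for points farther away.
functional_margins = y * (X @ w + b)
is_support = np.isclose(functional_margins, 1.0)

support_idx = np.flatnonzero(is_support).tolist()
print(support_idx)              # [0, 2]
print(X[is_support].tolist())   # [[2.0, 0.0], [0.0, 0.0]]
```

The other two points have a value of 2, so they sit comfortably outside the margin and play no part in fixing the boundary.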

⚙️ Optimization Problem

The classifier is built by solving:

min_{w, b} (1/2) ∥w∥^2

Subject to:

y_i (w^T x_i + b) ≥ 1 for every training point i, where y_i ∈ {+1, -1} is the class label

This ensures:

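- Correct classification (the constraints keep every point on its own side, at or beyond the margin's edge)

- Maximum margin (minimizing ∥w∥ maximizes 2 / ∥w∥)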
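For a feel of what solving this looks like, here is a minimal sketch that hands the problem to SciPy's general-purpose `minimize` with the SLSQP method. This is one convenient choice for a toy example, not how production SVM libraries do it (they typically use specialized solvers). The dataset and the helper names `objective`, `objective_grad`, and `margin_constraints` are invented for illustration.

```python
import numpy as np
from scipy.optimize import minimize

# Toy, perfectly separable data (invented for illustration).
X = np.array([[2.0, 0.0], [3.0, 1.0], [0.0, 0.0], [-1.0, 1.0]])
y = np.array([1.0, 1.0, -1.0, -1.0])

# The parameter vector packs the weights first and the bias last: [w_1, ..., w_d, b].
def objective(params):
    w = params[:-1]
    return 0.5 * w @ w                      # (1/2) ||w||^2, b is not penalized

def objective_grad(params):
    grad = params.copy()
    grad[-1] = 0.0                          # the objective does not depend on b
    return grad

def margin_constraints(params):
    w, b = params[:-1], params[-1]
    return y * (X @ w + b) - 1.0            # SLSQP treats each entry as ">= 0"

result = minimize(
    objective,
    x0=np.zeros(X.shape[1] + 1),
    jac=objective_grad,
    method="SLSQP",
    constraints=[{"type": "ineq", "fun": margin_constraints}],
)

w, b = result.x[:-1], result.x[-1]
print(result.success, np.round(w, 3), round(float(b), 3))
print("margin width:", 2.0 / np.linalg.norm(w))
```

For this toy set the solver should land close to w = (1, 0) and b = -1, which gives a margin width of about 2.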

🎸 A Creative Perspective

As someone working at the intersection of machine learning, music, and geometry, I see this idea everywhere.

- In music → we separate noise from clarity

- In ML → we separate classes with confidence

- In life → we seek the most stable decisions

The Hard-Margin Classifier teaches us:

The best boundary is not the closest fit — it’s the one that generalizes best.

🔍 When Should You Use It?

Use Hard-Margin when:

- Data is perfectly separable

- You want a clean theoretical model

Avoid it when:

- Data has noise

- Classes overlap (use Soft Margin instead)
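If you are not sure whether your data is perfectly separable, you can test it directly: ask a linear-programming solver whether any (w, b) satisfies all the constraints y_i (w^T x_i + b) ≥ 1. The sketch below uses `scipy.optimize.linprog` with a zero objective, so it only checks feasibility. The helper name `is_linearly_separable` and both datasets are assumptions for illustration.

```python
import numpy as np
from scipy.optimize import linprog

def is_linearly_separable(X, y):
    """True if some (w, b) satisfies y_i (w^T x_i + b) >= 1 for every i."""
    n, d = X.shape
    # Rewrite each constraint as  -y_i * [x_i, 1] . [w, b] <= -1
    A_ub = -y[:, None] * np.hstack([X, np.ones((n, 1))])
    b_ub = -np.ones(n)
    res = linprog(
        c=np.zeros(d + 1),                  # zero objective: feasibility only
        A_ub=A_ub,
        b_ub=b_ub,
        bounds=[(None, None)] * (d + 1),    # w and b may be negative
    )
    return bool(res.success)

# Separable toy set.
X_ok = np.array([[2.0, 0.0], [3.0, 1.0], [0.0, 0.0], [-1.0, 1.0]])
y_ok = np.array([1.0, 1.0, -1.0, -1.0])

# XOR layout: no single line can split it.
X_xor = np.array([[0.0, 0.0], [1.0, 1.0], [0.0, 1.0], [1.0, 0.0]])
y_xor = np.array([-1.0, -1.0, 1.0, 1.0])

print(is_linearly_separable(X_ok, y_ok))
print(is_linearly_separable(X_xor, y_xor))
```

If the check fails, a hard-margin classifier has no solution on that data, and a soft-margin variant is the usual next step.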

📈 Final Thoughts

The Hard-Margin Linear Classifier is more than a model.

It’s a reminder that:

- Simplicity can be powerful

- Geometry drives intelligence

- Optimization creates robustness